Revolutionizing AI Privacy: Apple’s Strategy for a Smarter, More Secure Siri

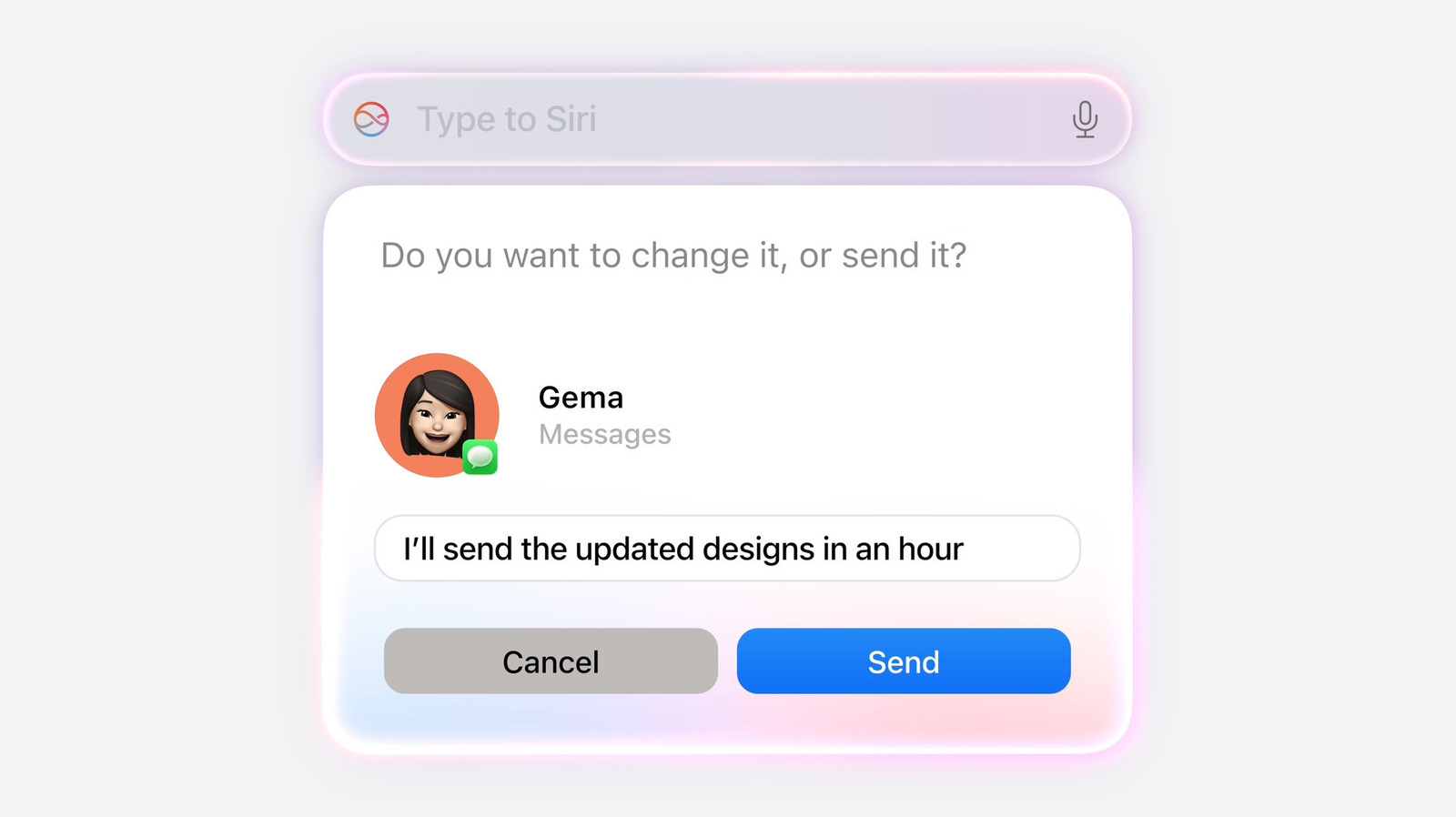

As competition in the generative AI space intensifies, Apple is reportedly preparing a bold update for its virtual assistant, Siri. With a revamped Siri expected to debut at WWDC 2026, industry reports suggest that Apple is doubling down on its commitment to user privacy, potentially setting a new standard for how AI models manage and store conversational data.

The Era of Auto-Deleting Chatlogs

According to Bloomberg’s Mark Gurman, Apple is building a granular control system for Siri’s chat history. Drawing inspiration from the disappearing messages feature in the native Messages app, the revamped Siri will reportedly offer users distinct options for data retention. Instead of an “all-or-nothing” approach, users may soon be able to set their chat history to clear automatically after 30 days or one year, or to keep it indefinitely.
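To make the reported options concrete, here is a minimal sketch of how a three-way retention setting could be modeled. It is purely illustrative and not Apple's implementation: the names `Retention`, `ChatEntry`, and `prune` are hypothetical, and "one year" is assumed to mean 365 days.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import List


class Retention(Enum):
    """Hypothetical setting mirroring the three reported options."""
    THIRTY_DAYS = timedelta(days=30)
    ONE_YEAR = timedelta(days=365)  # assumption: one year treated as 365 days
    FOREVER = None                  # keep history indefinitely


@dataclass
class ChatEntry:
    text: str
    created_at: datetime


def prune(history: List[ChatEntry], policy: Retention, now: datetime) -> List[ChatEntry]:
    """Return only the entries that are still inside the retention window."""
    if policy.value is None:
        return list(history)
    cutoff = now - policy.value
    return [entry for entry in history if entry.created_at >= cutoff]


now = datetime(2026, 6, 8)
history = [
    ChatEntry("old", now - timedelta(days=400)),
    ChatEntry("recent", now - timedelta(days=45)),
    ChatEntry("new", now - timedelta(days=1)),
]
kept = prune(history, Retention.THIRTY_DAYS, now)
print([entry.text for entry in kept])
```

With the 30-day policy only the newest entry survives, while the hypothetical `FOREVER` option returns the history unchanged.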

Furthermore, users will gain greater control over the assistant’s contextual memory. The update is expected to include a configuration that determines whether Siri initializes with the context of previous interactions or starts every new query as a completely fresh session.
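The difference between the two modes can be sketched in a few lines. This is an assumption-laden illustration rather than a description of how Siri works: `build_context` and its `carry_context` flag are hypothetical names invented for the example.

```python
from typing import List


def build_context(previous_turns: List[str], new_query: str, carry_context: bool) -> List[str]:
    """Assemble what the assistant would see for a new query.

    carry_context=True  -> earlier turns are included ahead of the new query
    carry_context=False -> the query starts a completely fresh session
    """
    prior = list(previous_turns) if carry_context else []
    return prior + [new_query]


earlier = ["Book a table for two", "Make it 7 pm"]
with_memory = build_context(earlier, "Add a reminder for it", carry_context=True)
fresh = build_context(earlier, "Add a reminder for it", carry_context=False)
print(len(with_memory), len(fresh))
```

In the fresh-session case the assistant would have no way to resolve "it", which illustrates the usability cost that comes with the more private setting.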

Privacy vs. Performance: The Trade-off

While these privacy features are a boon for security-conscious users, they present a strategic challenge for AI development. Many AI providers rely on large volumes of real-world conversations to refine their Large Language Models (LLMs) and personalize future interactions. By limiting the lifespan of chatlogs, Apple could slow the feedback loop that typically improves AI responses.

However, Apple is pivoting to a different approach. Reports indicate that the company is leaning heavily into synthetic data generation to train its models. By prioritizing privacy as a fundamental pillar of its AI architecture rather than an optional “incognito mode,” Apple aims to turn a potential performance disadvantage into a market-leading value proposition.

Why This Matters for the Tech Landscape

- Ingrained Security: Unlike competitors that treat privacy as an opt-in toggle, Apple is positioning these data retention controls as a default, built-in part of the user experience.

- Liability Reduction: With AI chatbot conversations increasingly drawing legal interest in criminal cases and civil litigation, Apple’s strategy gives users a clear way to avoid the permanent storage of sensitive conversations.

- Competitive Differentiation: While companies like OpenAI offer “Temporary Chat” modes, Apple’s systemic approach underscores its “Privacy First” branding, distinguishing it from rivals that utilize user data for iterative model training.

The Road to WWDC 2026

As we approach the start of WWDC on June 8, 2026, the tech community is watching closely to see how these privacy features will integrate with the overhauled Siri. If Apple successfully pairs robust AI performance with these strict data policies, it could establish a new blueprint for how Big Tech handles the tension between artificial intelligence advancement and individual digital sovereignty.