Revolutionizing the Virtual Assistant Experience

As the artificial intelligence race intensifies, Apple is preparing a strategic pivot that underscores its long-standing commitment to user privacy. According to a recent report from Bloomberg’s Mark Gurman, the upcoming overhaul of Siri, expected to be unveiled at WWDC 2026, will introduce chat management tools that put control over data retention firmly in the hands of the user.

Customizable Retention Policies for Your Conversations

The core of this update is granular control over chat history. In much of the industry, conversation logs are often stored indefinitely, in part to support model training. The revamped Siri, by contrast, will reportedly let users choose how long their conversations persist. According to the reported details, users will be able to configure Siri to:

- Automatically purge chat logs after 30 days.

- Retain conversations for up to one year.

- Keep a permanent record of interactions, if desired.

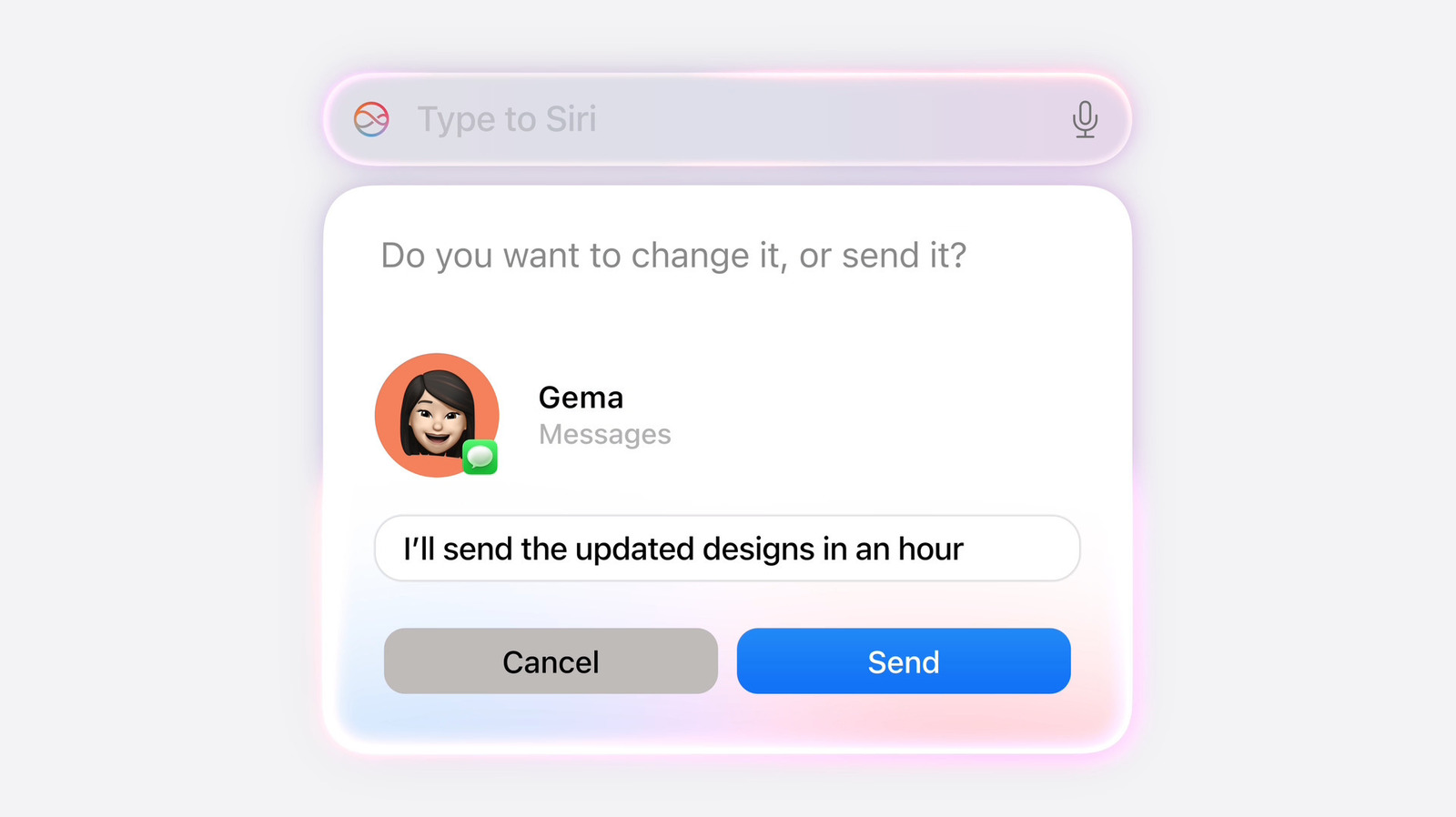

Beyond retention, Apple is reportedly adding a toggle for context awareness. Users will be able to decide whether Siri starts each new session with the context of prior conversations or begins from a ‘clean slate’, a choice that trades some convenience for additional privacy.

Privacy as a Competitive Differentiator

In an era when large language models (LLMs) thrive on vast amounts of personal user data, Apple’s approach is notably contrarian. While competitors such as OpenAI and Google often use user interactions to refine and tune their models, Apple has signaled a shift toward synthetic data generation. By training on artificial datasets rather than real-world user logs, Apple is turning a perceived disadvantage, slower model iteration, into a marketing pillar for privacy-conscious consumers.

This philosophy contrasts sharply with the ‘incognito’ or ‘temporary’ chat modes found in other AI ecosystems. Many companies treat privacy as an optional, opt-in feature that users must remember to switch on; Apple’s reported stance is that these safeguards should be built into the foundational architecture of the operating system.

Why This Matters for the Future of AI

The implications of this strategy are significant. As chatbot conversation logs draw growing scrutiny, both as evidence in legal discovery and as raw material for data mining, Apple is positioning itself as a ‘safe harbor’ for sensitive information. By building strict deletion options directly into the system, Apple could reduce the risks of data leaks and unauthorized surveillance of private conversations.

Anticipation Builds for WWDC 2026

With WWDC 2026 scheduled to kick off on June 8, the tech community is eager to see how these privacy features will fit into the broader iOS and macOS ecosystems. The new Siri represents more than a software update; it is a clear declaration that, for Apple, the future of AI is not just about intelligence but also about integrity. As the June debut approaches, the question remains: will the market favor data-hungry models, or will consumers flock to the privacy-centric design that Apple is building?